A version of this article first appeared in the February 2018 edition of our free newsletter. To subscribe, click here.

On one of our projects we have been analysing the major sub assemblies using sub assembly finite element models. Once the general design is frozen we will bring all these models together to create the global finite element model.

At that point we can carry out the loads analysis to develop the full aircraft balanced load cases, apply those loads to the aircraft model and run the cases for our final checks on the design.

There is always time pressure to get these tasks done, so when we created the sub assembly models (Wing, Horizontal Stabilizer, Vertical Tail) we did not take the time to idealize the structure to the correct level.

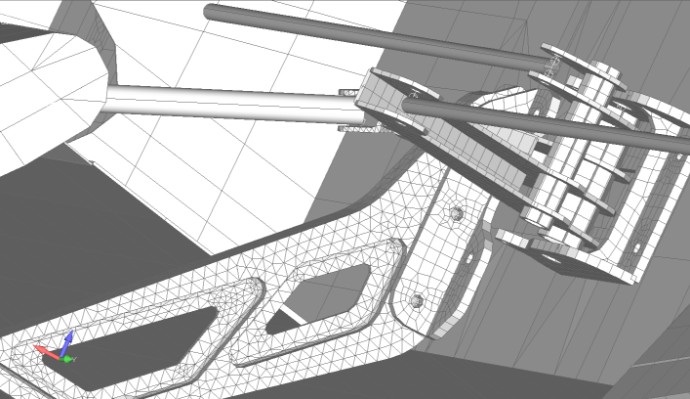

What I mean by this is that we modelled the main structural composite ‘boxes’ using a good idealization: laminate elements about 2in square. When it came to the attachment fittings for the control surfaces and flap, and the attachment to the supporting structure, we meshed those quickly using solid elements.

At the time we did this, we knew that when we brought all the sub assembly models together to create the global finite element model we might have an issue with the total number of nodes and elements.

But time was paramount and we put this from our minds.

We are now working on the aircraft (or global) finite element model, and when we bring in the sub assembly models we created previously we have over 700,000 nodes. For this type of aircraft and project, and considering that we will likely end up running over 100 cases on the global model, we are creating a data processing and data management headache.

Bear in mind that we are doing all of the finite element modelling, load analysis and stress analysis work with just two engineers.

As the global aircraft finite element model is our main analysis tool, we need it to be quick to modify and re-run, we need speed when we post-process data, and we don’t need terabytes of data that we have to manage.

And this brought home the simulation truism that it is easy to modify a simple model to make it more complex but almost impossible to modify a complex model to make it more simple.

It is also true that it is easier to create a representative complex model than it is to create a representative simple model.

So now we have to go back and put the work in to remodel areas of the structure to reduce complexity and still keep the model representative.

Maybe this does not add much extra cost (the original models were ‘quick’ to create anyway) but I hate the thought of redoing anything, and we should have done it right first time around.

I am hoping we can limit the global finite element model to below 100,000 nodes. That will give us a model that we can run the basket of critical cases on in an hour or so and leave us with a set of data that won’t cause storage problems.
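To put rough numbers on the storage side, here is a minimal back-of-envelope sketch comparing the 700,000 node model with the 100,000 node target over 100 load cases. It counts nodal displacement results only, and it assumes 6 degrees of freedom per node and 8 bytes per stored value; both figures are my assumptions for illustration, not solver-specific values. Element stresses, per-ply laminate results and solver scratch files are not counted and will usually be far larger, so treat the output as a lower bound that shows how the data scales with node count.

```python
def displacement_output_bytes(nodes, load_cases, dof_per_node=6, bytes_per_value=8):
    """Rough size of nodal displacement results alone, in bytes.

    dof_per_node and bytes_per_value are illustrative assumptions,
    not values taken from any particular solver's output format.
    """
    return nodes * dof_per_node * bytes_per_value * load_cases


def to_gigabytes(n_bytes):
    """Convert bytes to decimal gigabytes."""
    return n_bytes / 1e9


# Node counts and number of load cases are the figures quoted in the article.
current = displacement_output_bytes(nodes=700_000, load_cases=100)
target = displacement_output_bytes(nodes=100_000, load_cases=100)

print(f"700,000 nodes: {to_gigabytes(current):.2f} GB of displacements")
print(f"100,000 nodes: {to_gigabytes(target):.2f} GB of displacements")
print(f"Reduction factor: {current / target:.0f}x")
```

The displacement data scales linearly with node count, so the reduction here is a factor of seven. Solution time generally does not scale linearly, so this sketch says nothing about run times.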

It will also make it practical to run solutions other than just linear static on the global model.

The impacts of these aspects of modelling are difficult to account for: the time and cost of dealing with excessive solution run times, and of managing the data that complex models create, are real but very difficult to quantify.

- As a side note: about 10 years ago we were working on a Part 25 program for a major OEM. They sent us the global FE model to run for the work we were doing for them. At first we thought we could not get it to run, as the solver seemed to ‘hang’ during the solution. We were running the model on a high-end Dell workstation with 26GB of RAM. We discussed this with the client and they informed us that the model takes 4 to 5 days to run. It did take that long to run, and it produced enormous amounts of data. That work package inevitably took far longer to complete than we had planned.

The cost to simplify the global model is something that we do have to explain to the client; it is evident and is clearly accounted for. But the savings created by simplifying the model, which are large, are impossible to quantify accurately.

These problems occur because the client or manager cannot be aware of all the nuances of the analysis work that is done. It is always difficult to justify a guaranteed cost now against the potential for a much greater additional cost later.
